Homework 4

Due Date: Tuesday, October 27th at 11:59 PM

Problem 0. Homework Workflow [10 pts]

Problem 1. Motivating Automatic Differentiation [20 pts]

Problem 2. A Neural Network, Forward Mode [25 pts]

Problem 3. Visualizing Reverse Mode [10 pts]

Problem 4. A Toy AD Implementation [20 pts]

Problem 5. Continuous Integration and Coverage [15 pts]

IMPORTANT

Don't forget to work on Milestone 1: Milestone 1 Page.

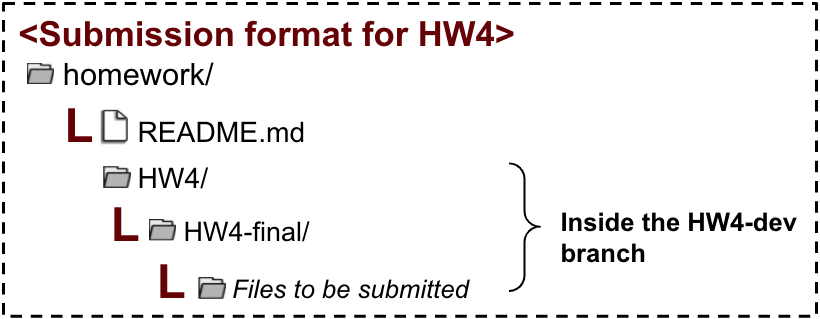

Problem 0: Homework Workflow

Once you receive HW3 feedback (no later than Wednesday 10/21), you will need to merge your HW3-dev branch into master.

You will earn points for following all stages of the git workflow which involves:

- 3pts for merging HW3-dev into master

- 5pts for completing HW4 on HW4-dev

- 2pts for making a PR on HW4-dev to merge into master

Problem 1: Motivating Automatic Differentiation

For scalar functions of a single variable, the derivative is defined by $$ f'(x) = \lim_{h \rightarrow 0} \frac{f(x+h)-f(x)}{h}$$

We can approximate the derivative of a function using finite but small values of $h$. All code for this problem should be contained in P1.py.
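As a quick illustration of the limit definition above, the sketch below evaluates the forward difference quotient for a different function, $\sin(x)$, at a single point. The choice of function, the point `x = 1.0`, the step size, and the variable names are assumptions made for illustration only; this is not the closure requested in Part A.

```python
import math

# Illustrative only: forward difference quotient (f(x+h) - f(x)) / h
# for f = sin at x = 1.0 with a moderately small step size.
x = 1.0
h = 1e-6
approx = (math.sin(x + h) - math.sin(x)) / h
exact = math.cos(x)  # known derivative of sin

print(f"approx = {approx:.8f}, exact = {exact:.8f}")
print(f"absolute error = {abs(approx - exact):.2e}")
```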

Part A: Write a Numerical Differentiation Closure

Write a closure called numerical_diff that takes as inputs a function f (of a single variable) and a step size h, and returns a function of x that computes the numerical approximation of the derivative of f at x with step size h.
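As a reminder of the closure pattern itself, here is a minimal sketch using an unrelated example. The names `make_scaler` and `scale` are hypothetical and are not part of the assignment; the point is only that the inner function keeps access to the outer function's arguments after the outer function returns.

```python
def make_scaler(factor):
    """Return a function that multiplies its input by `factor`."""
    def scale(x):
        # `factor` is captured from the enclosing scope.
        return factor * x
    return scale

double = make_scaler(2.0)
triple = make_scaler(3.0)
print(double(5.0), triple(5.0))
```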

Part B: Compare the Finite Difference to the True Derivative

Let $f(x) = \ln(x)$ and let $f^{\prime}\left(x\right)$ denote the first derivative of $f\left(x\right)$. Use your closure from Part A to compute $f^{\prime}_{FD}\left(x\right)$ for $0.2 \leq x \leq 0.4$ for $h=1\times 10^{-1}$, $h=1\times 10^{-7}$, and $h=1\times 10^{-15}$. Compare $f^{\prime}_{FD}\left(x\right)$ at the different values of $h$ to the exact derivative by plotting them together on a single figure. Save your plot as P1_fig.png.

Notes:

- Compute the exact derivative explicitly.

- Your plot should be readable and interpretable (i.e., you should include axis labels and a legend). You may need to change the line style for all lines to be visible.

- Your plot will contain four lines:

  - Analytical derivative

  - $f^{\prime}_{FD}\left(x\right)$ for $h=1\times 10^{-1}$

  - $f^{\prime}_{FD}\left(x\right)$ for $h=1\times 10^{-7}$

  - $f^{\prime}_{FD}\left(x\right)$ for $h=1\times 10^{-15}$

Part C: Why Automatic Differentiation?

Answer the following questions using two print statements to display your answers. These can be placed at the very end of P1.py (but before plt.show()). Each print statement should start with the string: "Answer to Q-i:" where i is either a or b to reference the questions below.

- Q-a: Which value of $h$ most closely approximates the true derivative? What happens for values of $h$ that are too small? What happens for values of $h$ that are too large?

- Q-b: How does automatic differentiation address these problems?

Deliverables

- P1.py

- P1_fig.png

Problem 2: A Neural Network, Forward Mode¶

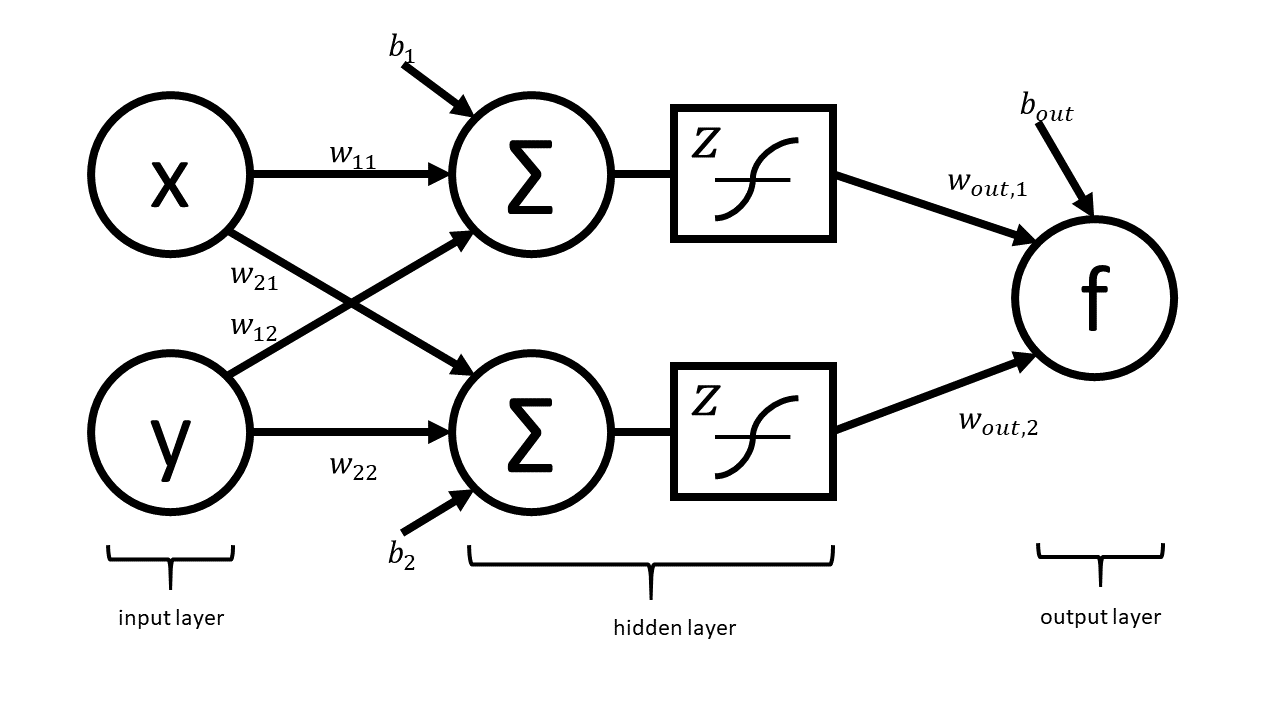

Artificial neural networks take as input the values of an input layer of neurons and combine these inputs in a series of layers to compute an output. A small network with a single hidden layer is drawn below.

This network can be expressed in matrix notation as $$f\left(x,y\right) = w_{\text{out}}^{T}z\left(W\begin{bmatrix}x \\ y \end{bmatrix} + \begin{bmatrix}b_{1} \\ b_{2}\end{bmatrix}\right) + b_{\text{out}}$$ where $$W = \begin{bmatrix} w_{11} & w_{12} \\ w_{21} & w_{22}\end{bmatrix}$$ is a (real) matrix of weights, $$w_{\text{out}} = \begin{bmatrix} w_{\text{out},1} \\ w_{\text{out},2} \end{bmatrix}$$ is a vector representing output weights, $b_i$ are bias terms, and $z$ is a nonlinear function that acts component-wise.

The above graph helps us visualize the computation in different layers. This visualization hides many of the underlying operations which occur in the computation of $f$ (e.g. it does not explicitly express the elementary operations).

Your Tasks

In this part, you will completely neglect the biases, $b_{i}$ and $b_{\text{out}}$. The mathematical form is therefore $$f\left(x,y\right) = w_{\text{out}}^{T}z\left(W\begin{bmatrix}x \\ y \end{bmatrix}\right).$$ Note that in practical applications the biases play a key role. However, we have elected to neglect them in this problem so that your results are more readable. You will complete the two steps below while neglecting the bias terms.

- As we have done in lecture, draw the complete forward graph. You may treat $z$ as a single elementary operation. Submit a picture of the graph as P2_graph.png. This can be a photo of your graph drawn on a piece of paper or something that you draw electronically.

- Use your graph to write out the full forward mode table and submit a picture of the table as P2_table.png. This table can, once again, be done on a piece of paper or in markdown.

Submission Notes:

- For the graph, you should explicitly show the multiplications and additions that are masked in the schematic of the network above.

- You should relabel the nodes of the graph with traces (e.g. $x_{13}$) as we have done in class.

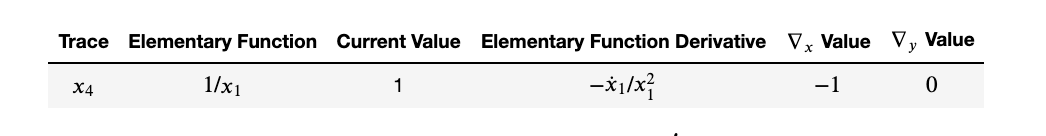

- Your table should include columns for the trace, elementary function, current function value, elementary function derivative, partial x derivative, and partial y derivative. Here is an example table with a row filled in (Note: Your table will not contain this exact row. Your table will have something else for $x_{4}$.)

- The values in your table should be in terms of $w_{1, out}, w_{2, out}, w_{11}, w_{12}, w_{21}, w_{22}, z,$ and $z^{\prime}$, where $\prime$ denotes a derivative.

- Pictures of handwritten graphs and tables are fine but make sure that these are legible.

- Table values should also be in terms of $x$ and $y$.
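To make the table columns concrete, the sketch below builds a forward-mode trace in code for an unrelated function, $f(x,y) = xy + \sin(x)$. The function, the trace labels `x1` through `x5`, and the helper name `forward_trace` are assumptions chosen for illustration; your table for the network will have different rows and should be written symbolically rather than numerically.

```python
import math

def forward_trace(x, y):
    """Forward-mode trace for the illustrative function f(x, y) = x*y + sin(x).

    Each row is (trace, elementary function, value, d/dx, d/dy).
    """
    rows = []
    # Inputs seed the derivatives: dx/dx = 1, dx/dy = 0, and vice versa.
    x1, dx1 = x, (1.0, 0.0)
    x2, dx2 = y, (0.0, 1.0)
    rows.append(("x1", "x", x1, *dx1))
    rows.append(("x2", "y", x2, *dx2))
    # x3 = x1 * x2 (product rule)
    x3 = x1 * x2
    dx3 = (dx1[0] * x2 + x1 * dx2[0], dx1[1] * x2 + x1 * dx2[1])
    rows.append(("x3", "x1*x2", x3, *dx3))
    # x4 = sin(x1) (chain rule)
    x4 = math.sin(x1)
    dx4 = (math.cos(x1) * dx1[0], math.cos(x1) * dx1[1])
    rows.append(("x4", "sin(x1)", x4, *dx4))
    # x5 = x3 + x4 (sum rule); this last row holds f and its partials
    x5 = x3 + x4
    dx5 = (dx3[0] + dx4[0], dx3[1] + dx4[1])
    rows.append(("x5", "x3+x4", x5, *dx5))
    return rows

for row in forward_trace(2.0, 3.0):
    print(row)
```

Each row depends only on rows above it, which is the property the forward mode table should make visible.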

Deliverables

- P2_graph.png

- P2_table.png

Problem 3: Visualizing Reverse Mode

This problem provides you with a tool to help visualize automatic differentiation. This automatic differentiation code base and GUI originated as the fall 2018 CS207 final project extension for Lindsey Brown, Xinyue Wang, and Kevin Yoon, and it has undergone further development. It is meant to be a resource for you as you learn automatic differentiation.

Part A: Preview of Virtual Environments

In lecture, we will learn how to use virtual environments to create workspaces that contain only certain packages. For this problem, we will walk through setting up a virtual environment with conda to hold a GUI that helps visualize automatic differentiation.

- Set up a virtual environment. Use the command conda create -n env_name python=3.6 anaconda where env_name is a name of your choosing for your virtual environment.

- Activate your virtual environment, using source activate env_name.

  - Note: Some systems might give an error here and ask you to execute a different sequence of commands. If this happens, please follow the commands suggested in your terminal.

- Clone the Auto-eD repository: git clone https://github.com/lindseysbrown/Auto-eD.git.

- Change into the new directory, cd Auto-eD.

- Install the dependencies using pip install -r requirements.txt.

Part B: Visualize Backward Mode for the Neural Network

For this part, we will use the simplified neural network model (no bias terms) from Problem 2 again. For this part only (Problem 3B), take $w_{\text{out}} = \begin{bmatrix} 1 \\ 2 \end{bmatrix}$, $W = \begin{bmatrix} 1.1 & 1.2 \\ 2.1 & 2.2 \end{bmatrix}$, and $z$ to be the identity.

To do so you'll be inputting $f(x,y)$ into either the command line (option A) or the GUI (option B), so you will need $f(x,y)$ in scalar form. (Hint: This should be the last line of your evaluation table.) Do not simplify by multiplying the weights together. Each of the 6 constants should appear in the function expression exactly once. This will look strange, but it is the way that you should do the problem.
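One way to sanity-check the scalar expression you derive is to compare it numerically against the matrix form at a test point. The sketch below evaluates the matrix form only; the helper name `f_matrix` and the test point are assumptions for illustration, and it deliberately does not write out the scalar form to enter into Auto-eD.

```python
# Weights given for Problem 3B; z is the identity.
w_out = [1.0, 2.0]
W = [[1.1, 1.2],
     [2.1, 2.2]]

def f_matrix(x, y):
    """Evaluate w_out^T (W [x, y]^T) with z as the identity."""
    # Hidden layer: one weighted sum of the inputs per row of W.
    hidden = [row[0] * x + row[1] * y for row in W]
    # Output: weighted sum of the hidden values.
    return sum(w * h for w, h in zip(w_out, hidden))

print(f_matrix(1.0, 1.0))
```

Your scalar expression should agree with `f_matrix` to within floating-point roundoff at any point you try.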

Visualize the reverse mode through one of the two following options.

Option A: Run Auto-eD Locally

- Run the file using python ADappHeroku.py. If you get an ImportError: Python is not installed as a framework. error, try running pythonw ADappHeroku.py instead.

- Go to http://0.0.0.0:5000/ in your web browser.

- Follow the input instructions. You are free to choose any $x$ and $y$ value at which to evaluate the function and its derivatives.

- Save the reverse mode graph as P3_graph.png.

- Deactivate your virtual environment using conda deactivate.

Option B: Use the Website

Note: Due to server limitations, the Auto-eD website only allows for a low number of simultaneous sessions, so there may be errors or latency. We'd prefer you ran the package locally.

- Go to https://autoed.herokuapp.com/ in your web browser.

- Follow the input instructions. You are free to choose any $x$ and $y$ value at which to evaluate the function and its derivatives.

- Save the reverse mode graph as P3_graph.png.

- Deactivate your virtual environment using conda deactivate.

Deliverables

- P3_graph.png

Notes:

- Please use the input calculator instead of typing the equation into the text box.

- Please explicitly input multiplication (e.g. input 4*x and not 4x).

- The app may display a simplified equation after you press calculate. The reverse mode, however, will still show all operations you input. Please do not simplify the equation as you input it.

Problem 4: A Toy AD Implementation

You will write a toy forward automatic differentiation class. Write a class called AutoDiffToy that can return the derivative (with respect to $x$) of functions of the form $$f = \alpha x + \beta$$ for constants $\alpha, \beta \in \mathbb{R}$.

Interface

- Must contain a constructor that sets the value of the function and derivative.

  - This would be like the first row in the evaluation trace tables that we've been making.

- Must overload functions where appropriate.

  - Note: Python's __add__(self, other) and __mul__(self, other) methods are meant to be defined for objects of the same type. Your implementation should not assume that other is a real number but be robust enough to handle the case when it is.

- Handle exceptions appropriately.

  - This is a good place to use (and practice) duck-typing. For example, rather than checking if an argument to a special method is an instance of the object, instead use a try-except block, catch an AttributeError and do the appropriate calculation.

  - Hint: Asking Forgiveness
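The duck-typing pattern described above can be sketched with an unrelated toy class. `Meters` and its `value` attribute are hypothetical names used only to show the try-except structure and the reflected method; this is not the AutoDiffToy class and it does not track derivatives.

```python
class Meters:
    """Toy length type used only to illustrate duck-typing (hypothetical)."""

    def __init__(self, value):
        self.value = value

    def __add__(self, other):
        try:
            # Assume `other` looks like a Meters object.
            return Meters(self.value + other.value)
        except AttributeError:
            # `other` has no `.value`; treat it as a plain number.
            return Meters(self.value + other)

    # Called for `number + Meters`, after the number's own __add__
    # returns NotImplemented.
    __radd__ = __add__

total = Meters(1.5) + Meters(2.0)
shifted = 3.0 + Meters(1.0)
print(total.value, shifted.value)
```

Reusing `__add__` for `__radd__` works here because addition is commutative; an operation whose operand order matters would need its own reflected method.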

Use Case

a = 2.0 # Value to evaluate at

x = AutoDiffToy(a)

alpha = 2.0

beta = 3.0

f = alpha * x + beta

Output

print(f.val, f.der)

7.0 2.0

Requirements

- Implementation must be robust enough to handle functions written in the form

f = alpha * x + beta

f = x * alpha + beta

f = beta + alpha * x

f = beta + x * alpha

- You should demo your code with an example for each of these 4 cases.

Deliverables

- P4.py containing your class and demo

Problem 5: Continuous Integration and Coverage

Note: You will not be able to start this problem until after lecture 12.

We discussed documentation and testing in lecture (or will very soon) and also briefly touched on code coverage. You must write tests for your code in your final project (and in life). There is a nice way to automate the testing process called continuous integration (CI). This problem will walk you through the basics of CI and show you how to get up and running with some CI software.

The idea behind continuous integration is to automate aspects of the testing process.

The basic workflow goes something like this:

- You work on your part of the code in your own branch or fork.

- On every commit you make and push to GitHub, your code is automatically tested by an external service (e.g. Travis CI). This ensures that there are no specific dependencies on the structure of your machine that your code needs to run and also ensures that your changes are sane.

- When you want to merge your changes with the master / production branch you submit a pull request to master in the main repo (the one you're hoping to contribute to). The repo manager creates a branch off master.

- This branch is also set to run tests on Travis. If all tests pass, then the pull request is accepted and your code becomes part of master.

In this problem, we will use GitHub to integrate our roots library with Travis CI and CodeCov. (Note that this is not the only workflow people use.)

Part A:

Create a public GitHub repo called cs107test and clone it to your local machine. (Note: This should be done outside your course repo.)

Part B:

Use the example from lecture 12 to create a file called roots.py, which contains the quad_roots and linear_roots functions (along with their documentation). Now, also create a file called test_roots.py, which contains the tests from lecture.

All of these files should be in your newly created cs107test repo. Don't push yet!!!
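For orientation, a pytest test file is an ordinary Python file containing functions whose names start with `test_`. The sketch below shows that shape with a hypothetical function `halve`; it does not reproduce the lecture's quad_roots or linear_roots code or their tests, and in practice the function would live in its own module that the test file imports.

```python
# Hypothetical function under test (stands in for a function in its own module).
def halve(x):
    """Return x / 2, rejecting non-numeric input."""
    if not isinstance(x, (int, float)):
        raise TypeError("x must be a number")
    return x / 2

# pytest collects functions whose names start with `test_`.
def test_halve_result():
    assert halve(4.0) == 2.0

def test_halve_type_error():
    # Check that bad input raises the expected exception type.
    try:
        halve("four")
    except TypeError as err:
        assert isinstance(err, TypeError)
    else:
        raise AssertionError("expected a TypeError")
```

Plain `assert` statements are enough for pytest to report failures, so no extra imports are needed for simple checks like these.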

Part C: Create an account on Travis CI and Start Building

Create an account on Travis CI and set your cs107test repo up for continuous integration once this repo can be seen on Travis.

Part D:

Create an instruction to Travis to make sure that

- python 3.6 is installed

- pytest is installed

The file should be called .travis.yml and should have the contents (Note: The yml line should not be present in your file. This is a rendering issue with the html. Everything below yml should be in your file. Please download and open the Jupyter notebook to see the correct format with syntax highlighting.):

yml

language: python

python:

- "3.6"

before_install:

- pip install pytest pytest-cov

script:

- pytest

Part E:

Push the new changes to your cs107test repo.

At this point you should be able to see your build on Travis and if and how your tests pass.

Part F: CodeCov Integration

In class, we also discussed code coverage. Just like Travis CI runs tests automatically for you, CodeCov automatically checks your code coverage.

Create an account on CodeCov, connect your GitHub, and turn CodeCov integration on.

Part G:

Update your .travis.yml file as follows (Note: The yml line should not be present in your file. This is a rendering issue with the html. Everything below yml should be in your file. Please download and open the Jupyter notebook to see the correct format with syntax highlighting.):

yml

language: python

python:

- "3.6"

before_install:

- pip install pytest pytest-cov

- pip install codecov

script:

- pytest --cov=./

after_success:

- codecov

Be sure to push the latest changes to your new repo.

Part H:

You can have your GitHub repo reflect the build status on Travis CI and the code coverage status from CodeCov. To do this, you should modify the README.md file in your repo to include some badges. Put the following at the top of your README.md file:

[](https://travis-ci.org/dsondak/cs207testing.svg?branch=master)

[](https://codecov.io/gh/dsondak/cs207testing)

Of course, you need to make sure that the links are to your repo and not mine. You can find embed code on the CodeCov and Travis CI sites.

Deliverables

- P5.md, which contains a link to your public cs107test repo. The course staff will grade the README in that repository.