Key Word(s): Autoencoders

CS-109B Data Science 2: Advanced Topics in Data Science

Lab 7: Autoencoders

Harvard University

Spring 2020

Instructors: Mark Glickman, Pavlos Protopapas, and Chris Tanner

Lab Instructors: Chris Tanner and Eleni Angelaki Kaxiras

Content: Eleni Angelaki Kaxiras, Vivek Hv, Cedric Flamant, and Pavlos Protopapas

# RUN THIS CELL TO PROPERLY HIGHLIGHT THE EXERCISES

import requests

from IPython.display import HTML

styles = requests.get("https://raw.githubusercontent.com/Harvard-IACS/2019-CS109B/master/content/styles/cs109.css").text

HTML(styles)

Learning Goals

By the end of this lab, you should be able to:

- Connect the representation that Principal Component Analysis produces to that of an autoencoder (AE).

- Add tf.keras Functional API into your machine learning arsenal.

- Implement an autoencoder using tf.keras:

  - build the encoder network/model

  - build the decoder network/model

  - decide on the latent/bottleneck dimension

  - train your AE

  - predict on unseen data

Note: To see solutions, uncomment and run the following:

# %load solutions/exercise2.py

The first time you run the cell, it loads the solution; run the cell again to actually execute the code.

from __future__ import annotations

import numpy as np

import seaborn as sns

import os

import datetime

import matplotlib.pyplot as plt

plt.rcParams["figure.figsize"] = (5,5)

%matplotlib inline

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras.models import Sequential, Model

from tensorflow.keras.layers import (Dense, Conv2D, Conv1D, MaxPooling2D, MaxPooling1D,
                                     Dropout, Flatten, Activation, Input, UpSampling2D)

from tensorflow.keras.optimizers import Adam, SGD, RMSprop

from tensorflow.keras.utils import to_categorical

from tensorflow.keras.metrics import (AUC, Precision, Recall, FalsePositives,
                                      FalseNegatives, TruePositives, TrueNegatives)

from tensorflow.keras.preprocessing import image

from tensorflow.keras.regularizers import l2

tf.keras.backend.clear_session() # For easy reset of notebook state.

print(tf.__version__) # You should see a > 2.0.0 here!

from tf_keras_vis.utils import print_gpus

print_gpus()

# set the seed for reproducibility of results

seed = 109

np.random.seed(seed)

tf.random.set_seed(seed)

# install this if you want to play around with Tensorboard

#!pip install tf-keras-vis tensorflow

%load_ext tensorboard

# remove old logs

!rm -rf ./logs/

Part 1: Autoencoders and the connection to Principal Component Analysis

Principal Component Analysis (PCA)

PCA decomposes a multivariate dataset into a set of successive orthogonal components (eigenvectors of the data's covariance matrix), ordered by how much of the variance each one explains. By keeping only the $N$ components with the largest eigenvalues, we reduce the dimensionality of our data to $N$ with minimal loss of information, as measured by RMS (Root Mean Squared) error.

PCA in sklearn is a transformer that learns those $N$ components via its .fit method. It can then be used to project new data onto these components. Remember from 109a that we always .fit only on the training set and .transform both the training and test sets.

from sklearn.decomposition import PCA

k = 2 # number of components that we want to keep

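X_train, X_test = load_data()  # placeholder: stands for your own data-loading function

pca = PCA(n_components=k)

# fit on the training set only, then apply the same projection to the test set
train_components = pca.fit_transform(X_train)
test_components = pca.transform(X_test)

Autoencoders (AE)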

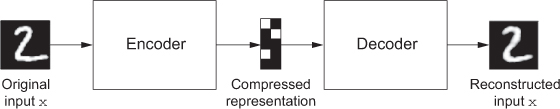

image source: Deep Learning with Python by François Chollet

An AE maps its input, usually an image, to a latent vector space via an encoder function, and then decodes it back to an output with the same shape as the input via a decoder function. It is effectively being trained to reconstruct the original input. By minimizing the reconstruction error (for example, the MSE between the decoder's output and the input), you can get the autoencoder to learn interesting latent representations of the data. Historically, autoencoders have been used for tasks such as dimensionality reduction, feature learning, and outlier detection.

One type of architecture for an AE is to have the decoder network be a 'mirror image' of the encoder. This symmetry is a common and intuitive choice, but it is not required.

We can say that AEs are self-supervised learning networks!

Understanding the connection between PCA and AEs

If the hidden and output layers of an autoencoder are linear and it is trained to minimize MSE, the autoencoder will learn hidden units that are linear representations of the data, just like PCA does. If we have $M$ hidden units in our AE, those will span the same space as the first $M$ principal components. Unlike PCA, the hidden layers of the AE will not produce orthogonal representations of the data. However, if we add non-linear components to our encoder-decoder networks, we can represent a non-linear space/manifold.
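To make the "same space, but not orthogonal" statement concrete, here is a minimal NumPy sketch. It does not train a network. It builds the weights a linear AE could end up with by mixing the PCA directions with an arbitrary invertible matrix, and checks that the reconstruction error is unchanged. The synthetic data and the names `V_M`, `A`, `W_enc`, and `W_dec` are illustrative assumptions, not part of the lab.

```python
import numpy as np

rng = np.random.default_rng(109)

# Synthetic stand-in data: 500 samples in 10 dimensions with correlated features.
X = rng.normal(size=(500, 10)) @ rng.normal(size=(10, 10))
X_centered = X - X.mean(axis=0)

M = 3  # bottleneck size = number of principal components kept

# PCA via SVD: the rows of Vt are the principal directions.
_, _, Vt = np.linalg.svd(X_centered, full_matrices=False)
V_M = Vt[:M].T                       # shape (10, M), orthonormal columns

# PCA seen as a linear encoder/decoder pair with tied weights.
Z_pca = X_centered @ V_M             # latent codes, shape (500, M)
X_hat_pca = Z_pca @ V_M.T            # reconstruction

# Mixing the latent units with any invertible matrix A spans the same subspace
# and reconstructs equally well, but the encoder directions are no longer orthogonal.
A = rng.normal(size=(M, M))          # hypothetical mixing matrix
W_enc = V_M @ A                      # encoder weights a linear AE could learn
W_dec = np.linalg.inv(A) @ V_M.T     # matching decoder weights
X_hat_ae = (X_centered @ W_enc) @ W_dec

mse_pca = np.mean((X_centered - X_hat_pca) ** 2)
mse_ae = np.mean((X_centered - X_hat_ae) ** 2)
gram = W_enc.T @ W_enc               # the identity only if the directions are orthonormal

print(f"PCA reconstruction MSE:       {mse_pca:.6f}")
print(f"Linear AE reconstruction MSE: {mse_ae:.6f}")
print("Encoder directions orthonormal?", np.allclose(gram, np.eye(M)))
```

Gradient descent on a linear AE has no reason to prefer the orthonormal solution over any of the mixed ones, which is why the learned hidden units generally differ from the principal components even though they span the same subspace.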

Fashion-MNIST

We will use the dataset of clothing article images (created by Zalando), consisting of a training set of 60,000 examples and a test set of 10,000 examples. Each example is a 28 x 28 grayscale image, associated with a label from 10 classes. The names of the classes corresponding to numbers 0-9 are:

'T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat', 'Sandal', 'Shirt', 'Sneaker', 'Bag', and 'Ankle boot'

The creators intend Fashion-MNIST to serve as a direct drop-in replacement for the original MNIST dataset for benchmarking machine learning algorithms. It shares the same image size and structure of training and testing splits. Each pixel is 8 bits so its value ranges from 0 to 255.

Let's load and look at it!

# get the data from keras - how convenient!

fashion_mnist = tf.keras.datasets.fashion_mnist

# load the data, already split into train and test! how nice!

(X_train, y_train),(X_test, y_test) = fashion_mnist.load_data()

# normalize the data by dividing by the maximum pixel intensity

# (each pixel is 8 bits so its value ranges from 0 to 255)

X_train, X_test = X_train / 255.0, X_test / 255.0

print(f'X_train shape: {X_train.shape}, X_test shape: {X_test.shape}')

print(f'y_train shape: {y_train.shape}, and y_test shape: {y_test.shape}')

# classes are named 0-9 so define names for plotting clarity

class_names = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat',

'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']

plt.figure(figsize=(10,10))

for i in range(25):
    plt.subplot(5,5,i+1)
    plt.xticks([])
    plt.yticks([])
    plt.grid(False)
    plt.imshow(X_train[i], cmap=plt.cm.binary)
    plt.xlabel(class_names[y_train[i]])

plt.show()

# choose one image to look at

i = 5

plt.imshow(X_train[i], cmap='gray');

plt.xlabel(class_names[y_train[i]]);

## your code here

# %load solutions/exercise1.py
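The exercise prompt and its solution are not shown here, but later cells rely on `h`, `w`, `X_train_flat`, and `X_test_flat`. A plausible sketch, assuming the exercise is to flatten each image into a vector so that PCA can consume it, is given below. It uses small random arrays (with a `_demo` suffix) as stand-ins for the real Fashion-MNIST data so that it runs on its own.

```python
import numpy as np

# Stand-ins for the normalized Fashion-MNIST arrays
# (the real ones hold 60,000 and 10,000 images).
rng = np.random.default_rng(109)
X_train_demo = rng.random((600, 28, 28))
X_test_demo = rng.random((100, 28, 28))

# Image height and width, read from the data rather than hard-coded.
h, w = X_train_demo.shape[1:]

# Flatten each h x w image into a single row vector of length h * w.
X_train_flat = X_train_demo.reshape(-1, h * w)
X_test_flat = X_test_demo.reshape(-1, h * w)

print(X_train_flat.shape, X_test_flat.shape)
```

With the real data, replace the `_demo` arrays with `X_train` and `X_test`.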

from sklearn.decomposition import PCA

# your code here

# %load solutions/exercise2.py
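The solution file is not reproduced here. One way the PCA step could look, assuming the flattened arrays from the previous exercise and two components, is sketched below on random stand-in data (so the printed variance ratios are meaningless; only the shapes and the fit/transform pattern carry over).

```python
import numpy as np
from sklearn.decomposition import PCA

# Random stand-ins for the flattened train and test images.
rng = np.random.default_rng(109)
X_train_flat_demo = rng.random((600, 784))
X_test_flat_demo = rng.random((100, 784))

# Fit on the training set only.
pca = PCA(n_components=2)
pca.fit(X_train_flat_demo)

# The fitted projection is then applied to unseen data.
X_test_pca_demo = pca.transform(X_test_flat_demo)

print(X_test_pca_demo.shape)
print(pca.explained_variance_ratio_)  # fraction of total variance per component
```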

# print the variance explained by those components

pca.explained_variance_

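Note: The first two components have explained variances of about 19 and 12. `explained_variance_` reports these in absolute units; to express them as a share of the total variance, use `pca.explained_variance_ratio_`.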

# transform the train and test set

X_train_pca = pca.transform(X_train_flat)

X_test_pca = pca.transform(X_test_flat)

X_train_pca.shape, X_train_pca[1:5,0].shape

class_names = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat',

'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']

fig, ax1 = plt.subplots(1,1, figsize=(9,9))

sns.scatterplot(x=X_train_pca[:,0], y=X_train_pca[:,1], hue=y_train,

palette=sns.color_palette("deep", 10), ax=ax1)

ax1.set_title("FASHION MNIST, First 2 principal components");

Part 2: Denoise Images using AEs

We will create an autoencoder which will accept "noisy" images as input and try to produce the original images. Since we do not have noisy images to start with, we will add random noise to our Fashion-MNIST images. To do this we will use the image augmentation library imgaug (see its documentation for details).

From this library we will use SaltAndPepper, an augmenter which replaces pixels in images with salt/pepper noise (white/black-ish colors), randomly with probability passed as parameter p. Use the code below to install the library in your virtual environment.

# !conda install imgaug

# ### OR

# !pip install imgaug

from imgaug import augmenters

# NNs want the inputs to be 4D

X_train = X_train.reshape(-1, h, w, 1)

X_test = X_test.reshape(-1, h, w, 1)

# Let's add sample noise - Salt and Pepper

noise = augmenters.SaltAndPepper(p=0.1, seed=seed)

seq_object = augmenters.Sequential([noise])

# Augment the data (add the noise)

X_train_n = seq_object.augment_images(X_train * 255) / 255

X_test_n = seq_object.augment_images(X_test * 255) / 255
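To see what this kind of noise does without installing imgaug, here is a rough NumPy sketch. It is a simplification and not imgaug's implementation: the hypothetical helper `add_salt_and_pepper` sets corrupted pixels to exactly 0 or 1, whereas imgaug uses white/black-ish values. The `_demo` arrays are random stand-ins for the images.

```python
import numpy as np

def add_salt_and_pepper(images, p=0.1, seed=None):
    """Return a copy of `images` (values in [0, 1]) in which each pixel is
    replaced with probability p by 0 (pepper) or 1 (salt), equally likely."""
    rng = np.random.default_rng(seed)
    noisy = images.copy()
    corrupt = rng.random(images.shape) < p    # which pixels get replaced
    salt = rng.random(images.shape) < 0.5     # salt vs. pepper for each pixel
    noisy[corrupt & salt] = 1.0
    noisy[corrupt & ~salt] = 0.0
    return noisy

# Stand-in "clean" images with values strictly between 0 and 1,
# so every corrupted pixel is guaranteed to differ from the original.
rng = np.random.default_rng(109)
clean_demo = rng.uniform(0.2, 0.8, size=(16, 28, 28, 1))
noisy_demo = add_salt_and_pepper(clean_demo, p=0.1, seed=109)

changed = np.mean(noisy_demo != clean_demo)
print(f"fraction of pixels changed: {changed:.3f}")
```

The fraction of changed pixels should land close to `p`, which is a quick sanity check on any noise function you write yourself.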

f, ax = plt.subplots(1,5, figsize=(20,10))
for i in range(5,10):
    ax[i-5].imshow(X_train_n[i, :, :, 0].reshape(28, 28), cmap=plt.cm.binary)
    ax[i-5].set_xlabel('Noisy '+class_names[y_train[i]])

f, ax = plt.subplots(1,5, figsize=(20,10))
for i in range(5,10):
    ax[i-5].imshow(X_train[i, :, :, 0].reshape(28, 28), cmap=plt.cm.binary)
    ax[i-5].set_xlabel('Clean '+class_names[y_train[i]])

tf.keras.Sequential API

This is what we have been using so far for building our models. Its pros: it is simple to use and lets you create models layer by layer. Its main con: it is not very flexible. Although it includes layers such as Merge, Concatenate, and Add that allow for a combination of models, it is difficult to make models with multiple inputs or shared layers. All layers, as the name implies, are connected sequentially.

Intro to tf.keras.Functional API

https://www.tensorflow.org/guide/keras/functional.

In this API, layers are built as graphs, with each layer indicating to which layer it is connected. Functional API helps us make more complex models which include non-sequential connections and multiple inputs or outputs.
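As a small illustration of a non-sequential connection, the sketch below builds a model with two inputs that are merged before the output layer. The second input (`extra_features`) and all layer sizes are made up for the example; nothing like it is used later in the lab.

```python
from tensorflow.keras import Model
from tensorflow.keras.layers import Input, Dense, Flatten, Concatenate

# Two inputs: an image and a small vector of extra features (hypothetical).
image_in = Input(shape=(28, 28, 1), name='image')
extra_in = Input(shape=(4,), name='extra_features')

# Image branch: each layer is called on the tensor it connects to.
x = Flatten()(image_in)
x = Dense(32, activation='relu')(x)

# Merge the two branches; this is not expressible as a plain Sequential stack.
merged = Concatenate()([x, extra_in])
out = Dense(10, activation='softmax', name='class_probs')(merged)

two_input_model = Model(inputs=[image_in, extra_in], outputs=out,
                        name='two_input_demo')
two_input_model.summary()
```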

Let's say we have an image input with a shape of (28, 28, 1) and a classification task:

num_classes = 10

inputs = keras.Input(shape=(h, w, 1))

# flatten the image so the Dense layers see one vector per example
x = Flatten()(inputs)
x = Dense(64, activation='relu')(x)
x = Dense(64, activation='relu')(x)
outputs = Dense(num_classes, activation='softmax', name='output')(x)

# this is a classifier used to illustrate the API, not an autoencoder
clf_model = Model(inputs=inputs, outputs=outputs, name='classifier')

clf_model.summary()

Create the Encoder

# input layer

input_layer = Input(shape=(h, w, 1))

- Use the Functional API.

- Create a pair of layers consisting of a Conv2D and a MaxPool layer which takes in our input_layer. Choose the number of filters.

- Stack 3 of these pairs, one after the other.

- Give this model the name latent_model (it's not your final model).

# your code here

# %load solutions/exercise3.py

- repeat the structure of your encoder but in "reverse".

- What is the output layer activation function? What are the dimensions of the output?

# your code here

# %load solutions/exercise4.py
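The course solutions are not reproduced here. The following is one possible encoder/decoder pair, given as a sketch under assumptions: the filter counts (16, 8, 8) are arbitrary choices, and the names `latent_view` and `output_layer` are chosen to match the cells that follow. Note the one convolution without `padding='same'` in the decoder, which trims 16 x 16 down to 14 x 14 so that the final upsampling lands exactly on 28 x 28.

```python
from tensorflow.keras.layers import Input, Conv2D, MaxPooling2D, UpSampling2D
from tensorflow.keras.models import Model

h, w = 28, 28
input_layer = Input(shape=(h, w, 1))

# Encoder: three Conv2D + MaxPooling2D pairs.
x = Conv2D(16, (3, 3), activation='relu', padding='same')(input_layer)
x = MaxPooling2D((2, 2), padding='same')(x)             # 14 x 14 x 16
x = Conv2D(8, (3, 3), activation='relu', padding='same')(x)
x = MaxPooling2D((2, 2), padding='same')(x)             # 7 x 7 x 8
x = Conv2D(8, (3, 3), activation='relu', padding='same')(x)
latent_view = MaxPooling2D((2, 2), padding='same')(x)   # 4 x 4 x 8 bottleneck

latent_model = Model(input_layer, latent_view, name='latent_model')

# Decoder: the encoder structure in reverse, with UpSampling2D undoing the pooling.
x = Conv2D(8, (3, 3), activation='relu', padding='same')(latent_view)
x = UpSampling2D((2, 2))(x)                             # 8 x 8 x 8
x = Conv2D(8, (3, 3), activation='relu', padding='same')(x)
x = UpSampling2D((2, 2))(x)                             # 16 x 16 x 8
x = Conv2D(16, (3, 3), activation='relu')(x)            # 'valid' padding: 14 x 14 x 16
x = UpSampling2D((2, 2))(x)                             # 28 x 28 x 16

# Sigmoid keeps each output pixel in [0, 1], matching the normalized inputs.
output_layer = Conv2D(1, (3, 3), activation='sigmoid', padding='same')(x)

ae_model = Model(input_layer, output_layer, name='ae_model')
print(latent_model.output_shape, ae_model.output_shape)
```

The output has the same dimensions as the input image, which is what lets the loss compare the two pixel by pixel.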

Choose an optimizer and a loss function. Use Early Stopping. To get good results you will need to train for about 20 epochs, which may take a long time depending on your machine. For the purposes of this lab, run for only 2 epochs.

(Optional: add Tensorboard).

Here is how to connect the two models:

# create the model

ae_model = Model(input_layer, output_layer, name='ae_model')

ae_model.summary()

# your code here

# %load solutions/exercise5-1.py

# %load solutions/exercise5-2.py
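A minimal sketch of the compile-and-fit step is shown below. To keep it fast and self-contained it makes several assumptions that differ from the lab: a deliberately tiny stand-in model (`tiny_ae`), random stand-in images with Gaussian rather than salt-and-pepper noise, and Adam with MSE loss as one reasonable choice among several. The key point is that the noisy images are the inputs and the clean images are the targets.

```python
import numpy as np
from tensorflow.keras.callbacks import EarlyStopping
from tensorflow.keras.layers import Input, Conv2D
from tensorflow.keras.models import Model

# Random stand-ins for the clean and noisy image sets.
rng = np.random.default_rng(109)
clean = rng.random((64, 28, 28, 1)).astype('float32')
noisy = np.clip(clean + 0.1 * rng.standard_normal(clean.shape), 0.0, 1.0)
noisy = noisy.astype('float32')

# A deliberately tiny stand-in for the real encoder/decoder.
inp = Input(shape=(28, 28, 1))
x = Conv2D(4, (3, 3), activation='relu', padding='same')(inp)
out = Conv2D(1, (3, 3), activation='sigmoid', padding='same')(x)
tiny_ae = Model(inp, out, name='tiny_ae')

tiny_ae.compile(optimizer='adam', loss='mse')

# Stop when the validation loss has not improved for 3 epochs in a row.
early_stop = EarlyStopping(monitor='val_loss', patience=3,
                           restore_best_weights=True)

# Inputs are the noisy images; targets are the clean ones.
history = tiny_ae.fit(noisy, clean,
                      epochs=2, batch_size=16,
                      validation_split=0.25,
                      callbacks=[early_stop], verbose=0)

print(sorted(history.history.keys()))
```

With the real data you would call `ae_model.fit(X_train_n, X_train, ...)` in the same way; `history.history` then holds the `loss` and `val_loss` curves plotted in the next cell.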

# Let's see how our AE did

fig, ax = plt.subplots(1,1, figsize=(10,6))

ax.plot(history.history['loss'], label='Train')

ax.plot(history.history['val_loss'], label='Val')

ax.set_xlabel("Epoch", fontsize=20)

ax.set_ylabel("Loss", fontsize=20)

ax.legend()

ax.set_title('Autoencoder Loss')

# start Tensorboard - requires grpcio>=1.24.3

#%tensorboard --logdir logs

# save your model's weights

ae_model.save_weights('ae_model.h5')

Part 3: Visualizing Intermediate Layers of AE

This is our "encoder" model which we have saved as:

encoder = Model(input_layer, latent_view, name='encoder_model')

# your code here

# %load solutions/exercise6.py

Possible answers

We can have bottleneck layers in convolutional autoencoders that are not dense but simply a few stacked feature maps such as above. They might have better generalizability due to only using shared weights. One interesting consequence is that without the dense layer you'll force translational equivariance on the latent representation (a particular feature in the top right corner will appear as an activation in the top right corner of the feature maps at the level of the bottleneck, and if the feature is moved in the original image the activation in the bottleneck will move proportionally in the same direction). This isn't necessarily a problem, but you are enforcing some constraints on the relationships between the latent space directions that you wouldn't be with the presence of a dense layer.
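The equivariance claim can be checked by hand with a small NumPy sketch. The helpers `correlate2d_valid` and `max_pool_2x2` are simplified stand-ins for a Conv2D layer (one filter, no bias, no activation) and a MaxPooling2D layer, and the cross-shaped feature is made up for the example. Moving the feature by (6, 10) pixels moves the convolution peak by the same amount, and moves the pooled peak by half of it.

```python
import numpy as np

def correlate2d_valid(image, kernel):
    """Plain 2D cross-correlation with 'valid' padding (what a conv layer computes)."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for r in range(out_h):
        for c in range(out_w):
            out[r, c] = np.sum(image[r:r + kh, c:c + kw] * kernel)
    return out

def max_pool_2x2(a):
    """2 x 2 max pooling with stride 2 (drops a trailing odd row/column)."""
    h2, w2 = a.shape[0] // 2, a.shape[1] // 2
    return a[:h2 * 2, :w2 * 2].reshape(h2, 2, w2, 2).max(axis=(1, 3))

def peak(a):
    """(row, col) of the largest activation, as plain ints."""
    return tuple(int(v) for v in np.unravel_index(np.argmax(a), a.shape))

# A 3 x 3 cross-shaped "feature" placed in an otherwise empty 28 x 28 image.
feature = np.array([[0., 1., 0.],
                    [1., 1., 1.],
                    [0., 1., 0.]])
image = np.zeros((28, 28))
image[5:8, 5:8] = feature

# The same feature moved 6 pixels down and 10 pixels to the right.
shifted = np.zeros((28, 28))
shifted[11:14, 15:18] = feature

# Use the feature itself as the filter, so the activation peaks where it sits.
act, act_shifted = correlate2d_valid(image, feature), correlate2d_valid(shifted, feature)
pooled, pooled_shifted = max_pool_2x2(act), max_pool_2x2(act_shifted)

print("conv peaks:  ", peak(act), peak(act_shifted))
print("pooled peaks:", peak(pooled), peak(pooled_shifted))
```

A dense bottleneck would break this relationship, since each dense unit can weight every spatial position differently.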

Visualize Samples reconstructed by our AE

n = np.random.randint(0,len(X_test)-5)

# original (clean) test images
f, ax = plt.subplots(1,5)
f.set_size_inches(80, 40)
for i,a in enumerate(range(n,n+5)):
    ax[i].imshow(X_test[a, :, :, 0].reshape(28, 28), cmap='gray')

# the same images with noise added
f, ax = plt.subplots(1,5)
f.set_size_inches(80, 40)
for i,a in enumerate(range(n,n+5)):
    ax[i].imshow(X_test_n[a, :, :, 0].reshape(28, 28), cmap='gray')

# the AE's reconstructions of the noisy images
preds = ae_model.predict(X_test_n[n:n+5])

f, ax = plt.subplots(1,5)
f.set_size_inches(80, 40)
for i,a in enumerate(range(n,n+5)):
    ax[i].imshow(preds[i].reshape(28, 28), cmap='gray')

plt.show()